AI for In-House Legal Teams: Building the Foundation for AI Adoption

AI adoption is at the top of the agenda for most in-house legal teams. But far fewer are thinking about what needs to be in place first. Our first post in this series covered the risks of unstructured AI adoption. This post turns to that question: how to build a foundation that makes AI trustworthy, defensible and genuinely useful.

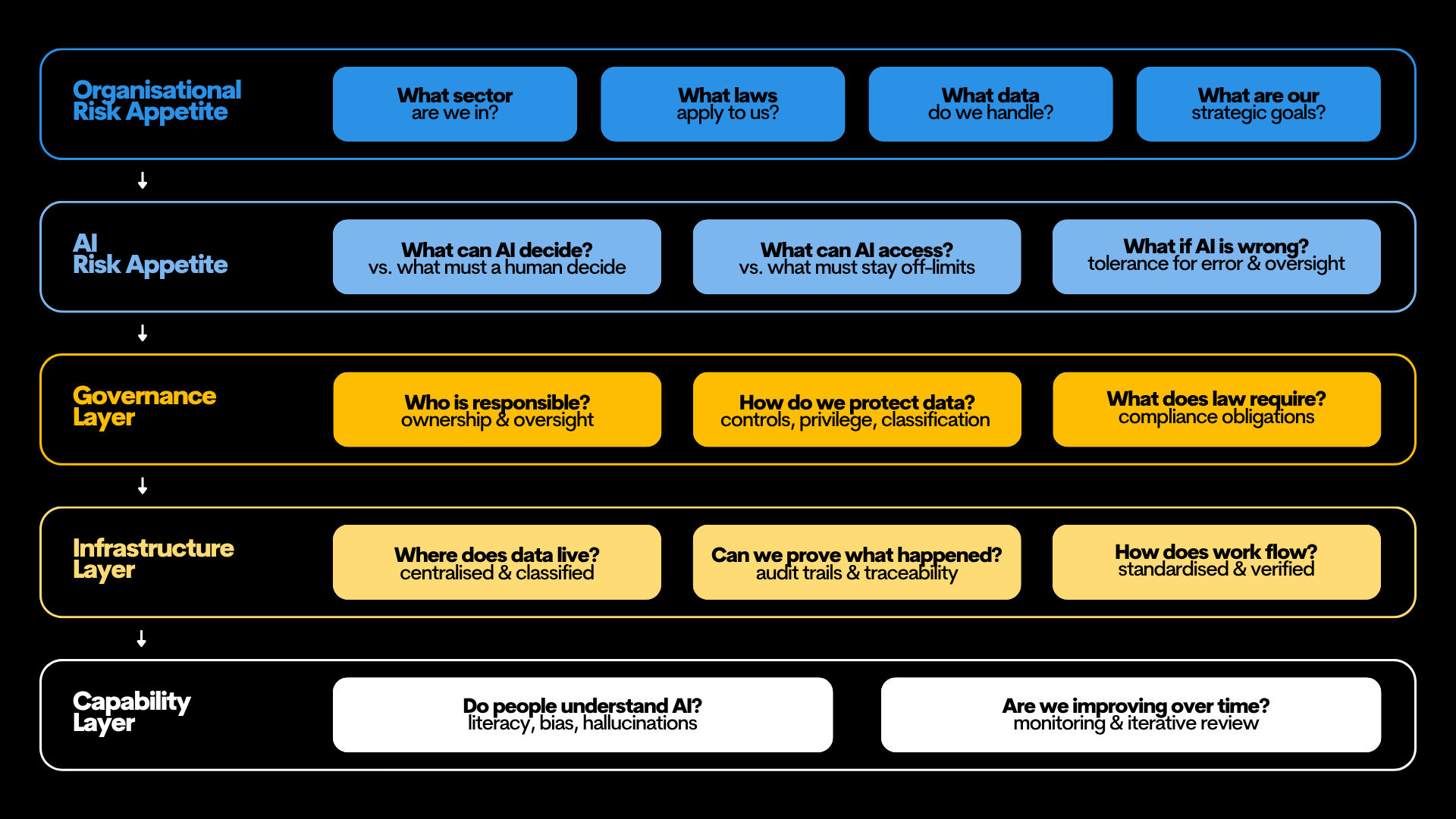

The Framework: A Five-Level Hierarchy

Getting AI right is not about having one policy in place. It requires a framework built across five interconnected levels, where each one reinforces the next.

The framework for reliable AI adoption for in-house legal teams

1. Organisational Risk Appetite

Before an AI programme can be governed, the legal team must align with the organisation’s existing risk posture. Most organisations have already settled these questions at an enterprise level; the discipline is making the answers explicit in the context of AI.

Four factors shape this baseline:

● Sector Dynamics: Are you in a highly regulated field like banking or healthcare?

● Legal Landscape: Which laws in the jurisdictions you operate in (such as the Privacy Act 1988) create "no-go" zones?

● Data Sensitivity: What is the nature of the information you handle daily?

● Strategic Goals: Is the organisation a first-mover or a cautious follower?

2. AI Risk Appetite

Once the organisational position is clear, it must be translated into AI-specific parameters. Leaders must resolve three critical questions before deployment:

1. What can AI decide vs what must a human decide? Legal teams need a clear position on where AI can recommend or assist and where a human must make the final call without exception.

2. What can AI access vs what must stay off-limits? Legal data is highly sensitive, so getting this wrong carries professional consequences, not just operational ones. The answer shapes every data control that follows.

3. What if AI is wrong? The question is not whether errors will occur, but what level of error is tolerable and what oversight exists to catch errors before they cause harm.

Note: Setting this appetite requires AI literacy at the leadership level. Decisions made without understanding concepts like "explainability" risk being either dangerously permissive or needlessly restrictive.

3. Governance Layer

Governance provides the "rules of the road." Clear answers here ensure that infrastructure investments lead to consistent and defensible outcomes.

1. Who is responsible? Every AI output should have a designated human owner: someone who validates that the work is accurate and properly applied. This ensures that AI recommendations are always backed by human expertise, providing a clear basis for any future review or internal audit.

2. How do we protect data? Clear rules on what information can be used with which AI tools ensure that confidential advice stays protected, and that records of AI use (such as prompts and outputs) are managed safely as part of the company's overall information strategy.

3. What does the law require? Australia does not have a standalone "AI law", but existing frameworks such as the Privacy Act 1988, the Australian Consumer Law (ACL) and directors' duties still apply to how AI is used.

4. Infrastructure Layer

If governance sets the rules, infrastructure is what makes those rules operational. Whether you use dedicated legal AI platforms or general-purpose tools, your infrastructure must be designed to turn your policies into practical controls.

In practice, that means addressing three key areas:

1. Where does data live? AI is only as reliable as its source. Contract and matter information scattered across shared drives produces inconsistent results. Centralising that information and classifying it consistently is the foundation of accuracy.

2. Can we prove what happened? AI outputs must be defensible. This requires audit trails for document changes and a record that attributes every recommendation to the individual who verified it.

3. How does work flow? Structured workflows create natural checkpoints. By mapping the lifecycle of a contract and embedding verification steps, teams can scale AI usage without scaling operational risk.

5. Capability Layer

The real power of AI comes when people have the skills to use it effectively.

● Critical Interpretation: Staff must be trained to recognise hallucinations, bias and model drift. Knowing when not to trust an output is as vital as knowing how to prompt the tool.

● Iterative Review: The foundation is not a "set and forget" project. It requires periodic auditing of AI outputs and refinement of workflows to address emerging risks. This ensures the human stays in the loop as a genuine check, not a formality.

Reliable AI, Real Possibilities

Good data governance is the difference between AI that creates risk and AI that creates value. When a legal team knows what data it holds and how it is protected, it can act with confidence rather than caution. The five levels outlined above are the operating conditions that allow AI to perform reliably and legal teams to stand behind what it produces.

This is the second post in a three-part blog series on how in-house legal teams can use AI safely and effectively across their legal workflows. In our final post, we explore the art of the possible: what AI can do for legal teams once the foundation is right, from intelligent automation to strategic decision support.

Ready to build your foundation? Platforms like Cubed by Law Squared are designed to bring your contracts and matters into one governed environment, ensuring that when you apply AI, it is working with data you can trust.